
The Secret to PowerShell Testing for Leveled-up Teams

Unless you’re the reincarnation of some famous daredevil, you know better than to put anything into Production without testing. This includes your PowerShell scripts.

Googling around has probably yielded the same three tools over and over:

- PSScriptAnalyzer

- Pester

- WhatIf

These are great tools… if you have the PowerShell experience points (XP) to understand how to use them.

In an earlier article, we explained that you can level up your Ops team much faster by embracing different levels of PowerShell experience instead of relying on your team’s PowerShell expert. Like a video game, you’ll have some players at just 1XP (they can open PowerShell and paste something into it) while others are 50,000XP superstars (they can use C# to write, test, and build cmdlets that are packaged in modules).

Teams embracing multiple levels of PowerShell XP need people at every level to be able to participate in PowerShell testing. These three tools might not be the best choice for such teams; the better way to treat PowerShell testing is by using a software development lifecycle (SDLC).

To save you research time, this article will share a bit about these three popular tools before we tell you the secret to PowerShell testing for “leveled-up” teams.

Popular Tools

The three most popular PowerShell testing tools seem to be PSScriptAnalyzer, Pester, and WhatIf.

PSScriptAnalyzer

PSScriptAnalyzer is a static analysis tool for PowerShell scripts and modules.

Running a set of rules based on PowerShell best practices (both from PowerShell officially and from the user community), the checker “generates DiagnosticResults (errors and warnings) to inform users about potential code defects.” It’s very much like the spellchecker in Microsoft Word, putting colored squiggles underneath anything with an issue.

It’s most appropriate for PowerShell scripts and functions, and the VS Code PowerShell extension underlines problems with squiggles as you type.

PSScriptAnalyzer is free and reduces the risk of finding errors later in the testing process, but it is NOT a replacement for testing.
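As a rough illustration, the sketch below runs the analyzer against a deliberately sloppy one-liner. It assumes the PSScriptAnalyzer module is already installed (for example via `Install-Module PSScriptAnalyzer`); the snippet being analyzed is made up for this example.

```powershell
# Assumes the PSScriptAnalyzer module is installed and importable.
Import-Module PSScriptAnalyzer

# A made-up script with two common problems: an alias (gci) and Write-Host.
$sample = @'
gci C:\Temp | Write-Host
'@

# -ScriptDefinition analyzes a string; use -Path for a file or folder instead.
$findings = Invoke-ScriptAnalyzer -ScriptDefinition $sample

# Show which rule fired, how severe it is, and where.
$findings |
    Select-Object RuleName, Severity, Line, Message |
    Format-Table -AutoSize -Wrap
```

With the default rule set, the alias and the `Write-Host` call are the kind of thing the analyzer typically flags as warnings. Neither stops the script from running, which is exactly why static analysis complements testing rather than replacing it.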

Pester

Pester is best for unit testing and is a free and open-source project designed for testing PowerShell, written in PowerShell.

Unit testing is a type of functional testing (‘does the software do what it’s supposed to do?’) and means testing a software “unit” (the smallest testable piece, such as a single function) in isolation, before attempting to integrate it into the larger whole. It’s like checking the date on the milk before you put it in your coffee: if the milk is expired, it never goes into your drink.

Pester is most appropriate for testing PowerShell functions, which act as units that can be tested. It’s not as good for scripts, largely because it’s super hard to think about and write PowerShell scripts as “units.” Trying to do so makes for awkward code that most PowerShell engineers will struggle to maintain. You may consider writing applications instead.

Pester reduces the risk of bugs and regressions in code as you change it, and of unexpected problems when upgrading dependencies (PowerShell version, other modules, etc.). However, this tool has a steep learning curve, requiring advanced PowerShell XP (to start, we estimate 20,000XP, well into expert territory), though The Pester Book is a great resource to learn the ins and outs of the tool.
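To make the idea of testing a function as a unit concrete, here is a minimal sketch of a Pester test file, assuming Pester version 5 or later is installed. The function `Get-UsagePercent` and the file name are hypothetical, invented for this example; in a real project the function would live in its own script or module and be dot-sourced or imported inside `BeforeAll`.

```powershell
# Get-UsagePercent.Tests.ps1 (hypothetical file name)
# Run with: Invoke-Pester -Path .\Get-UsagePercent.Tests.ps1

BeforeAll {
    # The "unit" under test, defined inline to keep the sketch self-contained.
    function Get-UsagePercent {
        param(
            [Parameter(Mandatory)] [double]$Used,
            [Parameter(Mandatory)] [double]$Total
        )
        if ($Total -le 0) { throw 'Total must be greater than zero.' }
        [math]::Round(($Used / $Total) * 100, 1)
    }
}

Describe 'Get-UsagePercent' {
    It 'returns the percentage used, rounded to one decimal place' {
        Get-UsagePercent -Used 25 -Total 200 | Should -Be 12.5
    }

    It 'throws when the total is zero' {
        { Get-UsagePercent -Used 1 -Total 0 } | Should -Throw
    }
}
```

Each `It` block checks one behavior in isolation, so a failure points at a specific expectation rather than at the script as a whole.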

WhatIf

As Adam the Automator put it, “All compiled PowerShell cmdlets include a parameter called `WhatIf`. This parameter helps you evaluate if a command will work as you expect or if it will start a nuclear meltdown.”

WhatIf is a simulation mode that basically lets you dry-run your script and catch any consequences before pushing to production.

Functions can support WhatIf, but it’s a bit complicated. Don’t literally create a parameter called WhatIf; instead, add SupportsShouldProcess to your CmdletBinding attribute and wrap each change-making action in a `$PSCmdlet.ShouldProcess()` check.

Because WhatIf is implemented at the cmdlet level, you have to trust that the cmdlet developer implemented it properly and without bugs. And even if it’s perfectly implemented, WhatIf is NOT a replacement for true testing. For example:
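A minimal sketch of that pattern follows. The function name `Remove-StaleLog` is hypothetical; the parts that matter are `SupportsShouldProcess` in the attribute and the `$PSCmdlet.ShouldProcess()` check around the destructive call, which together give the function working `-WhatIf` and `-Confirm` switches.

```powershell
function Remove-StaleLog {
    # SupportsShouldProcess adds -WhatIf and -Confirm to the function.
    [CmdletBinding(SupportsShouldProcess)]
    param(
        [Parameter(Mandatory)]
        [string]$Path
    )

    # ShouldProcess(target, action) returns $false under -WhatIf,
    # so the destructive call below is skipped during a dry run.
    if ($PSCmdlet.ShouldProcess($Path, 'Remove stale log')) {
        Remove-Item -LiteralPath $Path
    }
}
```

Calling `Remove-StaleLog -Path .\old.log -WhatIf` prints a `What if:` message and leaves the file alone; dropping `-WhatIf` deletes it.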

- `del myfile.txt -WhatIf` outputs `Performing the operation "Remove File" on target "c:\pathmyfile.txt".` instead of actually deleting the file.

- It can’t tell you if there would be an error actually deleting the file, like permission denied or file is read-only, which you can only find out when you run the script.
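The gap is easy to reproduce. The following sketch creates a throwaway read-only file, then compares the dry run with the real thing. It assumes PowerShell running on Windows; read-only handling may differ on other platforms.

```powershell
# Create a throwaway file and mark it read-only.
$file = New-TemporaryFile
$file.IsReadOnly = $true

# The dry run reports what would happen and raises no error.
Remove-Item -LiteralPath $file.FullName -WhatIf

# The real run is where the problem finally shows up.
try {
    Remove-Item -LiteralPath $file.FullName -ErrorAction Stop
}
catch {
    Write-Warning "Real deletion failed: $($_.Exception.Message)"
}

# Clean up: clear the read-only flag, then delete for real.
if (Test-Path -LiteralPath $file.FullName) {
    $file.IsReadOnly = $false
    Remove-Item -LiteralPath $file.FullName
}
```

The dry run looks clean, and only the real call surfaces the failure. That is the difference between simulating a change and testing it.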

The Issue?

These tools can be very helpful for catching some human errors, but they are VERY technical. They can be a great option for those with high PowerShell XP. But if you want to embrace a holistic, team-focused PowerShell approach to level up your WHOLE team, these tools will be minimally useful.

Instead, approach PowerShell testing in the same way your developer colleagues approach the SDLC.

PowerShell Testing for Leveled-up Teams: Follow SDLC

Why Do It:

DevOps, right? It’s Development and Operations together. DevOps is a buzzword, and it isn’t always practiced “to the letter.” (You probably do stuff your Devs don’t know about and vice versa.)

In the case of PowerShell testing, though, Ops teams can definitely take a leaf out of Dev’s book: SDLC. The software development lifecycle (or “SDLC”) is a process you enforce to produce the best-quality software at the lowest cost in time and money. The SDLC’s process stretches from inception to release.

Even if this concept is foreign to you, the good news is that, while scripts are code (and thus technically software), they aren’t nearly as much code nor should they be nearly as complex as an application. Because of this, the SDLC you create for your PowerShell scripts will be far less rigorous. But if you want to test PowerShell, you have to have some sort of SDLC in place. The most important thing at this point is taking on the mindset of SDLC, or enforcing a process for PowerShell.

An SDLC is NOT just for your Dev team and their applications. Maintaining your PowerShell scripts and modules by using an SDLC benefits your PowerShell team in both the short and long term.

What to Know:

An SDLC is appropriate for any and all PowerShell you want to test before using. This includes not just your modules but also your scripts. The point is to have a process, whether you automate it or do it manually.

Part of an SDLC can involve unit testing, static analysis, and even running tests.

Testing can’t eliminate risk. There’s no such thing as a perfect test or perfect code or “error-free” or “risk-free”; anyone promising you that is full of it.

I created a scorecard to help you calculate the risk of migrating Batch scripts to PowerShell, which triangulates three variables: script length, script complexity, and potential risk. You can control the first two, but the risk is always out of your control. Testing helps mitigate these risks, but don’t over-test so much that you’re wasting business resources. “Good enough” is usually good enough, because not every defect is actually a problem. You have to ask yourself (or your team) whether fixing the problem will be worth the investment.

What to Do:

Simply put, come up with your SDLC for PowerShell, then use it! Here’s a sample PowerShell SDLC:

| Stage | Questions to Ask | Purpose |
|---|---|---|
| Planning | What are the needs and requirements? What other assets will need to be created or changed alongside the script? | The only ‘perfect’ PowerShell script is one that can be easily used by people other than the author and that can be easily updated. The secret to this, of course, is clear, accurate comments and good comment-based help. |
| Scripting | Is this addressing the need and requirements given? | This is the best step to use the tools we discussed above. |
| Local Testing | Does it function? | If it doesn’t work on your own machine, there’s no point in sending your PowerShell to the next stage. |
| Quality Testing | Does it meet quality standards? | Code that is messy, poorly commented, or difficult to maintain should be rejected here. This is a great place for code review. (Not doing that? You probably should be.) |
| Pre-production Testing | Does its functionality meet requirements? Do requirements meet the need? Can it be safely deployed? | Just because it works doesn’t mean it’s the correct solution to the problem or that it meets stakeholders’ needs. By testing in an exact replica of your Production environment, the bugs there should be the same bugs you’d get in Production. |
| Production | Let ’er rip! | See the Two Important Points below. |
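For teams that want to automate part of the Scripting and Local Testing stages, a small gate function is one option. The sketch below is only an illustration: the function name `Test-ScriptReadiness` and its parameters are hypothetical, and it assumes both the PSScriptAnalyzer and Pester (version 5 or later) modules are installed.

```powershell
function Test-ScriptReadiness {
    [CmdletBinding()]
    param(
        [Parameter(Mandatory)] [string]$ScriptPath,  # script or folder to analyze
        [Parameter(Mandatory)] [string]$TestPath     # Pester test file or folder
    )

    # Static analysis: collect warnings and errors only.
    $findings = @(Invoke-ScriptAnalyzer -Path $ScriptPath -Severity Warning, Error)

    # Unit tests: -PassThru returns a result object in addition to console output.
    $tests = Invoke-Pester -Path $TestPath -PassThru

    # Summarize both checks in one object the caller can inspect or log.
    [pscustomobject]@{
        AnalyzerFindings = $findings.Count
        FailedTests      = $tests.FailedCount
        Ready            = ($findings.Count -eq 0) -and ($tests.FailedCount -eq 0)
    }
}
```

A call such as `Test-ScriptReadiness -ScriptPath .\Deploy.ps1 -TestPath .\tests` (both paths are placeholders) returns an object whose `Ready` property is `$true` only when the analyzer is quiet and no test failed. A passing gate is a reason to move to the next stage, not a substitute for the later stages.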

Two Important Points About Production

- Create multiple environments (at minimum Test and Prod) configured as closely as possible to one another. Simply put, if you’re not running scripts/modules in testing environments that are (nearly) identical to your Production environment, you’re not really testing. You’re just wasting a bunch of team time.

- Do. Not. Edit. Code. Between. Environments. If you test your PowerShell in Test and then change something and put the changed PowerShell right into Prod, you’ve negated your testing work. Instead, if you have to make changes, test them again in Test before pushing to Prod. Remember: Mitigate risk where you can, even if you can’t eliminate it.
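One lightweight way to check that nothing changed between environments is to compare file hashes. The sketch below uses the built-in `Get-FileHash` cmdlet; the function name `Test-SameScript` and its parameter names are hypothetical.

```powershell
function Test-SameScript {
    [CmdletBinding()]
    param(
        [Parameter(Mandatory)] [string]$TestedPath,   # copy that passed testing
        [Parameter(Mandatory)] [string]$DeployedPath  # copy about to run in Prod
    )

    # SHA256 is the default algorithm; it is named here for clarity.
    $tested   = Get-FileHash -LiteralPath $TestedPath   -Algorithm SHA256
    $deployed = Get-FileHash -LiteralPath $DeployedPath -Algorithm SHA256

    # Identical hashes mean the two files have the same content.
    $tested.Hash -eq $deployed.Hash
}
```

If the function returns `$false`, someone edited the script after it was tested, and it should go back through Test before it goes anywhere near Prod.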

Bonus Advice: Not Everything Needs to Be a Script

“Duh.” But sometimes we all forget even obvious stuff.

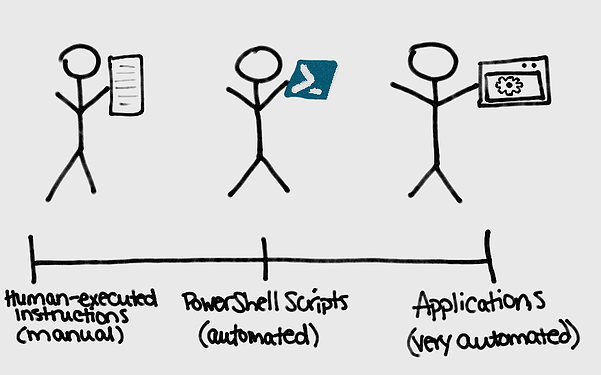

Consider your code assets on a spectrum from “instructions performed by a human” to “scripts” to “applications.” Think of configuring a domain controller: it happens so rarely that automating it doesn’t make sense. Instead, just make it a set of instructions performed by a human operator.

Embracing multiple PowerShell skill levels means anyone can just jump in, largely because PowerShell is so powerful. But high-XP PowerShell superstars often go bananas creating amazing feats of PowerShell skill, which are later inaccessible to those with lower PowerShell XP.

A word to the wise: Just because it can be PowerShell doesn’t mean it always should be. If you can’t think of a way to test your script, then it probably shouldn’t be a script run by a computer. If you can think of a way to test it, make sure it’s following your PowerShell SDLC to maximize efficiency and quality.

This is just the tip of the iceberg for all things PowerShell. For many more ways to give your PowerShell scripts and modules a boost, take a look at our free eBook, “Ultimate Powershell Levelup Guide”. Sign up for your copy today!